Sora is impressive (to say the least), but taking AI cinema to the next level means giving control back to us, not going down the route of an AGI that does everything for us from a single prompt.

As a filmmaker I’ve made a blueprint of what I need from tools like Sora as they become part of the professional film landscape.

Real-time control

Filmmaking is a detail-orientated business. Production is a real-time endeavour. You have cast and crew living in the moment, immersed in the script, making split-second creative decisions all day, bouncing ideas off each other.

For this to work in the virtual world of AI filmmaking, the world engine is going to need a real-time aspect.

This will most likely depend on third-party AI models and AI tools connected to a core engine like Sora via API calls.

With Runway, one of the current video tools, you can’t make adjustments in real time as the scene unfolds; you only get to choose from a bunch of takes afterwards.

So real-time decisions are needed, and these are very computationally intensive. I think initially these cutting-edge AI production suites will run into the tens of thousands of dollars per month to operate, and will require real expertise and investment in specialist hardware to assemble. But depending on how elaborate the shot is, it’s still going to be a fraction of the cost of using a soundstage or virtual production studio, with green screen VFX, real locations and real actors.

The decisions you make deliberately and slowly, after reviewing dailies or in the edit, will still be there. Sitting and waiting for AI to generate one take and then another, in an iterative way, will probably still be part of AI filmmaking too. But you need that real-time environment of decision-making alongside it.

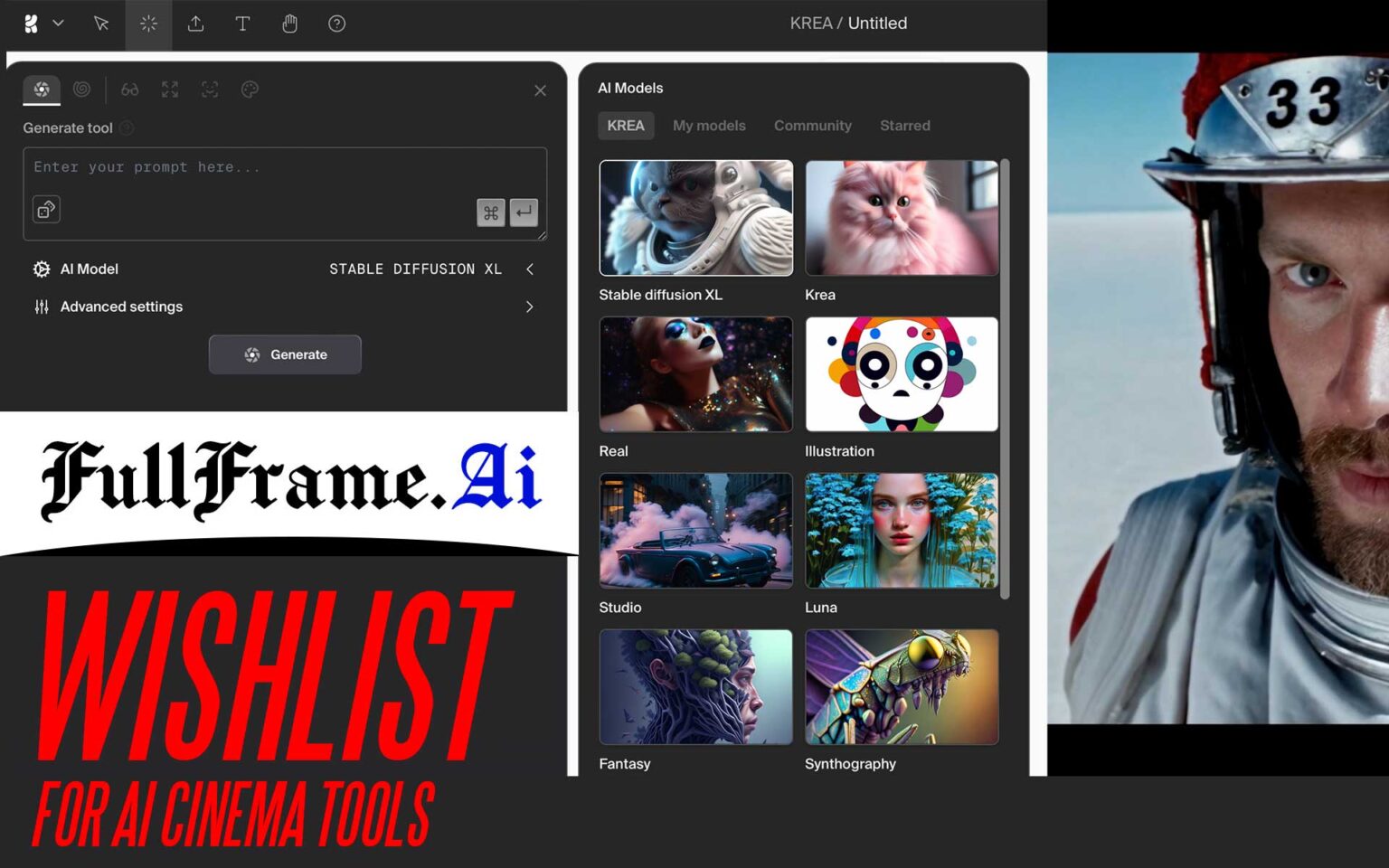

There are already real-time tools for AI content generation, like Krea.ai, an example of which you can see further down the page.

It will come; it is a matter of scaling up compute power.

New Sora videos just dropped on TikTok by OpenAI team and people are going crazy.

Nothing in this video is real🤯

6 wild new examples: 🧵👇

1. Labrador Hacker pic.twitter.com/HBFgpH4kG4

— Min Choi (@minchoi) February 21, 2024

Consistency

Consistency is key for film; it isn’t just about a one-off scene or a single image. Themes, styles and characters have to go the distance, have to come and go. All the visual aspects of the movie such as costumes, props and locations need to be consistent for an almost unlimited number of different takes and cuts. To enable this, the AI world-builder needs an in-depth understanding of your story and script (see “script handling” below) and what each character represents.

You should be able to do virtual casting based on acting styles and physical traits.

These virtual actors have to stay in character, and physical objects can’t have small details coming and going which break continuity. The AI also needs to have a sense of time: what time of day it is, whether a character ages, how they look at different times during the story. It also needs a grasp of the different eras that can appear in one film.

Ideally the AI would have a continuity editor built in, which would save us a lot of work constantly fixing it ourselves.

But at the same time we still need that overriding control so that when we want to purposefully break continuity or have an actor go out of character, we can.

Sora is a good step in the right direction as it already recognises a need for consistency in a single scene, but can it do it over an entire ‘shoot’ with multiple scenes, and hundreds of different takes? Hours and hours of consistent principal photography that cuts together seamlessly? That would be the goal for me.

Intentionality and casting

Sora is very good at coming up with one-off eye candy at the moment, just based on a few short words, but filmmaking has to be a lot more intentional and motivated.

For that a different kind of UI is needed, and as I touched on earlier there are real-time AI tools like “Krea.ai” that will help make this kind of generation more intentional:

This is insane.. damn. Look at how the Generative AI process nails the consistent light source, shadows and reflections across the whole scene here.#ai #art pic.twitter.com/7WI5zAhVzs

— Martin Nebelong (@MartinNebelong) February 21, 2024

Very fine control over lighting, camera movement, timing, pacing and set layout has to be part of future tools.

I think such tools will be separate apps and services, requiring a separate subscription, with a separate market with lots of third-party companies adding to it… maybe an AI grading tool from Blackmagic, for example.

Beyond that, control over the exact positioning of actors and the exact placement of where scenes are shot within a larger location, as well as the ability to ask virtual actors to do another take with new instructions, which they understand and act on… will be key to making AI filmmaking work.

It is a big ask, but I’m confident it will come. ChatGPT already works in a similar way when we interact with it. It understands context, where it is in the conversation and what it said previously without having to be reminded from scratch each time. So I believe OpenAI has the technology to give us an intelligent workflow that works for cinema production.

Basically the key thing is we have to take back as much control from the AI as possible, with intuitive user interfaces. Otherwise, we will cede our artistic vision completely to the machine, and nobody wants that.

LOG mode

Being able to hand-grade our footage and apply existing LUTs sounds better to me than prompting the AI with words to get colour consistency between cuts and specific looks. LOG is an easier way to do this than natural language.
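To make the LUT idea concrete, here is a minimal sketch in Python of what applying a simple 1D LUT to 10-bit footage amounts to. The function name, the five-point curve and the sample code values are all made up for illustration; real grading tools typically use 3D LUTs and camera-specific log curves, which this does not attempt to model.

```python
def apply_1d_lut(code_value, lut, bit_depth=10):
    """Map one integer code value through a 1D LUT with linear interpolation.

    `lut` is a list of output values in the 0.0-1.0 range, evenly spaced
    across the input range. Hypothetical helper, not any real tool's API.
    """
    max_code = (1 << bit_depth) - 1                    # 1023 for 10-bit
    x = min(max(code_value, 0), max_code) / max_code   # clamp, normalise to 0..1
    pos = x * (len(lut) - 1)                           # position within the LUT
    lo = int(pos)                                      # entry at or below pos
    hi = min(lo + 1, len(lut) - 1)                     # entry above, clamped
    frac = pos - lo
    return lut[lo] * (1 - frac) + lut[hi] * frac


# Made-up 5-point contrast curve standing in for a real LUT.
example_lut = [0.0, 0.1, 0.5, 0.9, 1.0]

# Grade three sample 10-bit code values: black, mid and white.
graded = [apply_1d_lut(v, example_lut) for v in (0, 512, 1023)]
```

The point is that a grade like this is a fixed, repeatable mapping: the same LUT gives the same look on every shot, which is much harder to guarantee with a text prompt.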

The finished output from the server also needs a codec selection. If it only lets us download scenes in H.265 at low bitrates, this won’t cut it for professional filmmaking of course.

Ideally, we also need to have at least 2.5K resolution to play with rather than the current trend of AI outputting approximately 720p. In the future I am sure 4K and 8K will be possible too, but for me this just isn’t a priority right now.

Camera and format selection

One thing that is a high priority though, is precise control over camera format and lenses. For stamping our own mark on AI cinematography it is vital to have a choice in simulated imaging format and lenses… be it full frame, Super 35mm, Super 16, digital or film. The right tool for the job!

Many modern features mix formats in an intentional way, like the black and white film stocks in Oppenheimer. So a mix is ok but you wouldn’t want the AI having control over such key decisions without us being able to override it. Fine, if it has an idea and you like it, you can go with it – but the buck stops with us, right?

Lenses are also crucial, and not just the choice between anamorphic and spherical, but between focal lengths, apertures and the type of rendering – vintage or a more modern rendering. The more human control over this the better, and the ability to free-roam around the virtual set with a “director’s viewfinder” will be ideal.
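As a rough illustration of why format and focal length have to be chosen together, here is a small Python sketch of the standard angle-of-view formula for a spherical (rectilinear) lens focused at infinity. The sensor widths are nominal figures I am assuming for the example, since exact gate sizes vary between cameras, and the function names are hypothetical.

```python
import math

# Nominal sensor/gate widths in mm. Exact figures vary by camera, so treat
# these as illustrative assumptions rather than specifications.
FORMAT_WIDTH_MM = {
    "full_frame": 36.0,
    "super35": 24.9,
    "super16": 12.5,
}


def horizontal_fov_degrees(focal_length_mm, sensor_width_mm):
    """Horizontal angle of view: 2 * atan(width / (2 * focal length))."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


def equivalent_focal_length(focal_length_mm, from_format, to_format):
    """Focal length on `to_format` that matches the horizontal field of view
    of `focal_length_mm` on `from_format`."""
    ratio = FORMAT_WIDTH_MM[to_format] / FORMAT_WIDTH_MM[from_format]
    return focal_length_mm * ratio


# A 50mm lens on full frame, and its rough match on Super 35 and Super 16.
fov_full_frame = horizontal_fov_degrees(50, FORMAT_WIDTH_MM["full_frame"])
match_super35 = equivalent_focal_length(50, "full_frame", "super35")
match_super16 = equivalent_focal_length(50, "full_frame", "super16")
```

Matching the field of view is only part of the look, of course. Depth of field and lens rendering still differ between formats, which is exactly why the choice should stay with us.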

Audio and soundtrack production

AI will be as advanced in audio as it is visually, and it is ironic to me that the first AI movies are from a silent era! The audio side comes back to giving us full control and a choice. Going back to the casting of virtual actors for example, their voices and vocal mannerisms are critical. We need choice, and we need control over it.

On the soundtrack side, I’d want to use real music by real musicians wherever possible, and the AI will probably dovetail with this by focusing on the ambient music side of the soundscape, AI foley and SFX work.

Script handling

As well as real music, I’d like to use real words. Real dialogue. I want my script to be as much defined by the hand of a human being as the cinematography, casting and costume design is.

If we are building a virtual world with AI, any decision the AI makes for us will be defined by how well it understands the source material. Perhaps it will understand the story better than us! And will make better decisions! Great from an AI engineer’s POV, but this isn’t really the point. In order for AI art to have a human component and authenticity, it cannot be entirely machine driven. When it comes to the most important decisions that go into making a film and screenplay, it has to be the imperfect human leaving their fingerprint on it creatively, which is where our own writing, our own ideas and direction come to the forefront ahead of the AI.

We need a way to discuss the script with the machine, and make sure that it understands the content it is generating at every step. In addition, the AI needs to have a UI that facilitates access to a timeline of material already shot, so you can go back and make adjustments, big or small, and even completely reshoot a scene later or edit the script on the fly halfway through a shoot.

Something which might help train our AI model on our own script is to feed our dailies and edited sequences back into it, so it’s on the same page as the human editor is.

Catering choices

Even AI gets hungry, so you better ask it what it wants for lunch before attempting a month-long toil of principal photography.

Lighting

Without light, cinematography doesn’t exist. It’s the art of light as much as it is the art of camera composition and movement. With Sora, AI has been trained on cinematography and lighting already in a kind of 3D space related to video-game engines like Unreal Engine – and it’ll only get better the more it learns, but will it understand a script well enough to light it in a way that serves the story?

Will you even want it to do this, or do you prefer to direct the cinematography entirely yourself? You could literally place your own virtual lights around the set with just a mouse and keyboard.

That to me is the ideal scenario – AI as facilitator, human as decision-maker.

The more control it can give back to us the better.

For example, determining what natural light or time of day serves the dialogue and story best at any given moment is where our own artistic talent should shine once again.

In summary, it’s all about control

tl;dr:

- Intentional control over every detail when needed – lenses, movement, composition, costumes, makeup, including all the tiny details, and the ability to converse with the virtual actors the AI is generating, to the extent of a real director and cinematographer on a real movie

- Consistency – especially acting, actors, faces, props, locations, camera format, grading and cinematography style

- 10bit LOG mode and finishing codec selection

- Camera and lens selection, choice of formats

- Audio generation and soundtracks

- Script and story understanding by the AI at a deep level, and the ability to converse with it about them

- A timeline and workflow, to allow you to go back and reshoot any scene

- Ability to feed our edited sequences back into the model to train it

- Lighting engine with full control, and any preset AI rendering decisions should be based on very high quality training data, such as an understanding of real world masters of cinematography like Roger Deakins

(And I think we can do the edit ourselves can’t we!)

AI cinema is not about generating stock footage and wanging it together in Premiere. It might be at first, but looking to the future it is much more than that. MUCH more.

AI is going to be about augmenting our vision and talent and goals as filmmakers.

It is about giving back control, not about doing everything for us from a tiny seed of an idea.

Artificial general intelligence (AGI) will be able to do this, but where’s the fun in that? Where’s the human involvement?

Furthermore, other humans want to see art directed by the hand of another human, not a robot. And existing film cast and crew need jobs, not to have them completely vanquished.

I see AI filmmaking as akin to video game development and CGI, where Unreal Engine can be used as a tool to paint a virtual universe, one in which a human being has made 90% of the key decisions.

This is a far more satisfying and creatively enjoyable route for professional AI to take. Sure, there will be stripped down, simplified consumer versions too. I am not so much interested in those though when it comes to cinema.

A film director makes hundreds of decisions every day, big and small, and lives in the world they’re creating for months, even years. I hope it will be the same with AI filmmaking, so that each film lives or dies by the human vision and skill fed into the machine. A lot of the key roles on real films need to find their place in AI production – costume designers should still design costumes, makeup artists should apply virtual makeup, casting directors should define the cast, directors should direct, cinematographers should light.

AI has the power to remove ALL the usual real world barriers to shooting. Gone will be rewriting scenes as a location wasn’t available or the budget didn’t stretch far enough. No more limiting the scope of your ideas or story because you have a limited budget, can’t do CGI, or have a limited crew and cast of 5 people.

Of course there are the highly unethical and problematic areas of AI filmmaking too. The job losses in real filmmaking come from the fact that one person can now do X different roles at once with 1000% more productivity than before, all from a desk at home. The grass roots of the film industry, in terms of nearly all lower-end paid roles, will change drastically. It will be even harder to go out in the real world, blissfully ignorant of AI, and get a job the old way on a real world production.

The other controversial area will be the guardrails of good taste imposed on the tools by corporations like Microsoft, and the politically correct bias of corporate AI systems, which are there to avoid illegal content, controversy and bad PR, and to expose AI investors to less legal risk. I understand why the guardrails are needed but they WILL arbitrarily limit the characters you’re allowed to create and the stories you’re allowed to tell through most AI tools. The results might indeed be too safe and derivative.

However, once this groundbreaking technology matures and there are open source versions as capable as the best closed source AI like Sora, we can take the guardrails off for creative purposes like a bunch of punch-drunk pirates. Unfortunately this will lead to people making all sorts of illegal content, deep fakes, copyright infringement and the virtual placement of big name actors into AI content without their consent. So with great power comes great responsibility.

Indeed, I think the conflict between open and closed source AI will be one of the most controversial aspects of the brave new world of AI filmmaking, and the second one will be that of who makes the chips.